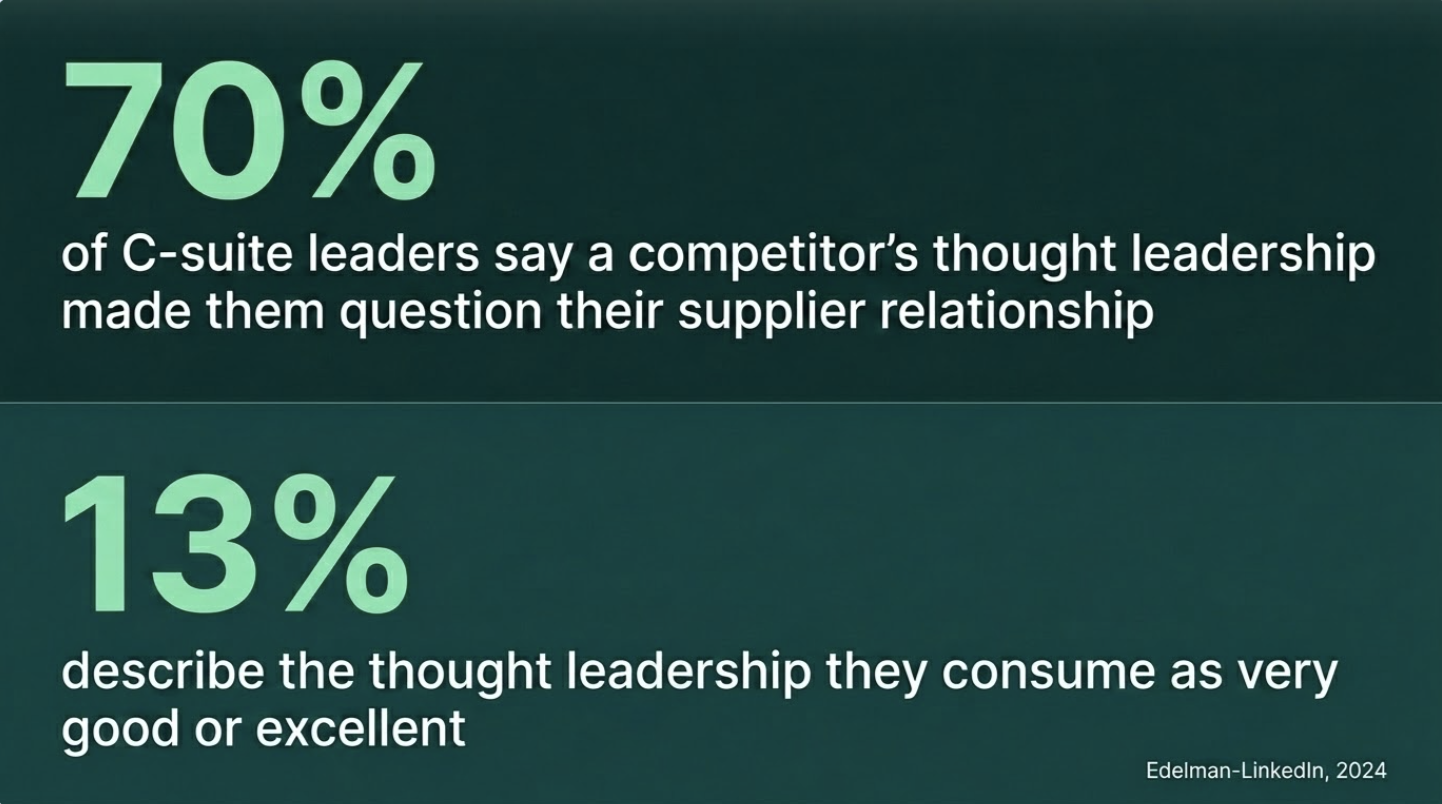

The evidence that this matters is not theoretical. According to the 2024 Edelman-LinkedIn B2B Thought Leadership Impact Report, which surveyed nearly 3,500 global business executives, 70% of C-suite leaders say a competitor's thought leadership has led them to question whether they should continue working with an existing supplier. The same report found that only 13% of decision-makers describe the thought leadership they consume as very good or excellent. The gap between how much thought leadership is being produced and how much of it is working is large - and closing it requires more than better writing.

Meanwhile, the volume problem has accelerated. HubSpot's 2024 State of Marketing data found that the share of marketers using AI for content operations nearly doubled in a single year, from 35% to 64%. The share of marketers creating blog content without any AI involvement dropped from 65% to 5% in two years. The content environment has never been more crowded. The average piece of brand thought leadership has never been harder to distinguish from a competitor's.

There is a structural fix. A single, well-designed 500-person nationally representative consumer survey produces a finding no competitor can replicate. Brands that commission their own research do not just produce better content - they produce content that is structurally impossible for anyone else to publish. And the barrier that made this prohibitive - cost and timeline - is not what it used to be.

The Borrowed Research Trap Is Costing You Differentiation

Citing syndicated research is not a neutral act. It is a competitive concession.

When a beauty brand cites a Mintel report on consumer skincare habits, and a direct competitor cites the same Mintel report in their next campaign, both brands have used research to confirm the same observation. The category insight exists. The credibility claim cancels out. Neither brand has said anything the other couldn't say tomorrow.

This is the borrowed research trap. It feels rigorous - the data is real, the source is credible - but it does nothing strategically. The brand appears informed, not authoritative. There is a meaningful difference between those two positions.

The test is straightforward. Before publishing any piece of branded content, ask: could our primary competitor publish this tomorrow? If the data is publicly available, the answer is yes. And if yes, the content is confirming category membership, not building category standing.

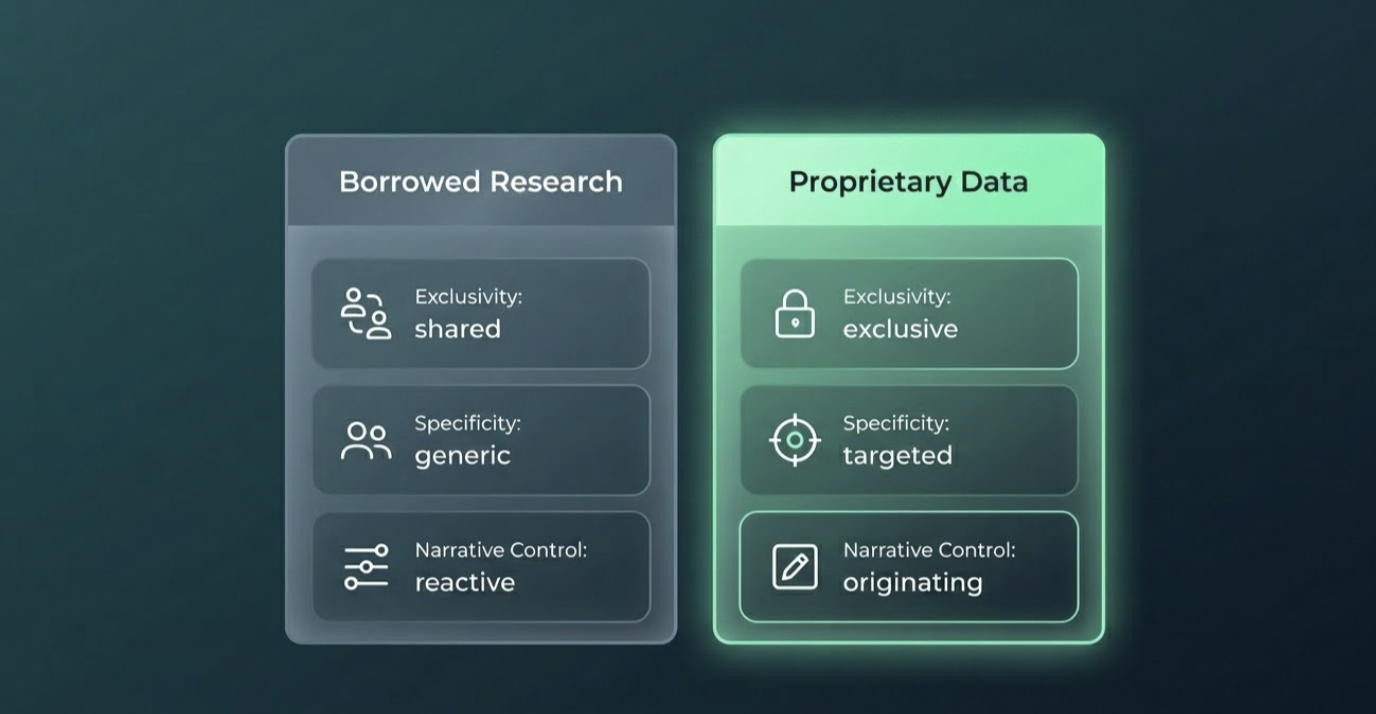

Borrowed research confirms category membership. Proprietary data creates category standing.

The problem compounds in categories where multiple brands are fighting for the same positioning territory. Every challenger brand claiming to understand "the modern conscious shopper" is working from the same behavioral data sets. The findings are accurate. They are also available to every competitor in the segment at the cost of a syndicated subscription. No brand that builds its thought leadership on shared data is saying something only it can say.

What Proprietary Data Actually Gives You That Syndicated Research Doesn't

There are three specific advantages to owning your data. Each does distinct strategic work.

Exclusivity. When a brand commissions a nationally representative consumer survey, the findings belong to that brand. No competitor can cite them. No agency can pull them from a shared database. The argument is not replicable. The competitor cannot match it without commissioning their own study, and by that point, the brand has already set the terms of the conversation.

Specificity. Syndicated research answers the questions the research firm decided to ask. Proprietary research answers the questions your strategy needs answered. A mid-size beauty brand trying to understand whether its core customer distinguishes between clean and clinical formulations will not find that answer in a Mintel beauty report. The category is defined too broadly; the segment is not surfaced; the question was never asked. Commission the survey, define the audience, write the question - and the finding maps directly onto the positioning decision, the campaign brief, the board deck.

Narrative control. Whoever asks the question controls the conversation. A brand that publishes the first nationally representative data on how US consumers define value in the skincare category has named the terms. The category is now discussing a question this brand originated. Competitors who respond are, by definition, reacting to a frame someone else established. That is not a marginal advantage. Category conversations have long memories - the brand that surfaced an insight first is cited as the source long after the original publication.

Two brands have built content authority models on exactly this logic. NerdWallet maintains a dedicated data hub, commissions nationally representative surveys through The Harris Poll, and distributes findings directly to the Associated Press and over 1,600 media outlets - each survey producing a citable number no competitor in personal finance can replicate. Morning Consult operates on the same principle at scale: proprietary polling as the product, published continuously, making Morning Consult the origin point for category conversations rather than a participant in them. The mechanism is identical in both cases. Ask the question. Own the finding. The category comes to you.

The same mechanism is available to any brand in any B2C category - beauty, grocery, financial services, retail - willing to ask the question their competitors haven't asked.

How to Commission a Consumer Survey Your Content Team Can Actually Use

Traditional research firms operate on timelines of six to eight weeks from brief to deliverable. That timeline is the primary reason most brands default to syndicated data for their content programmes. Here is the operational alternative.

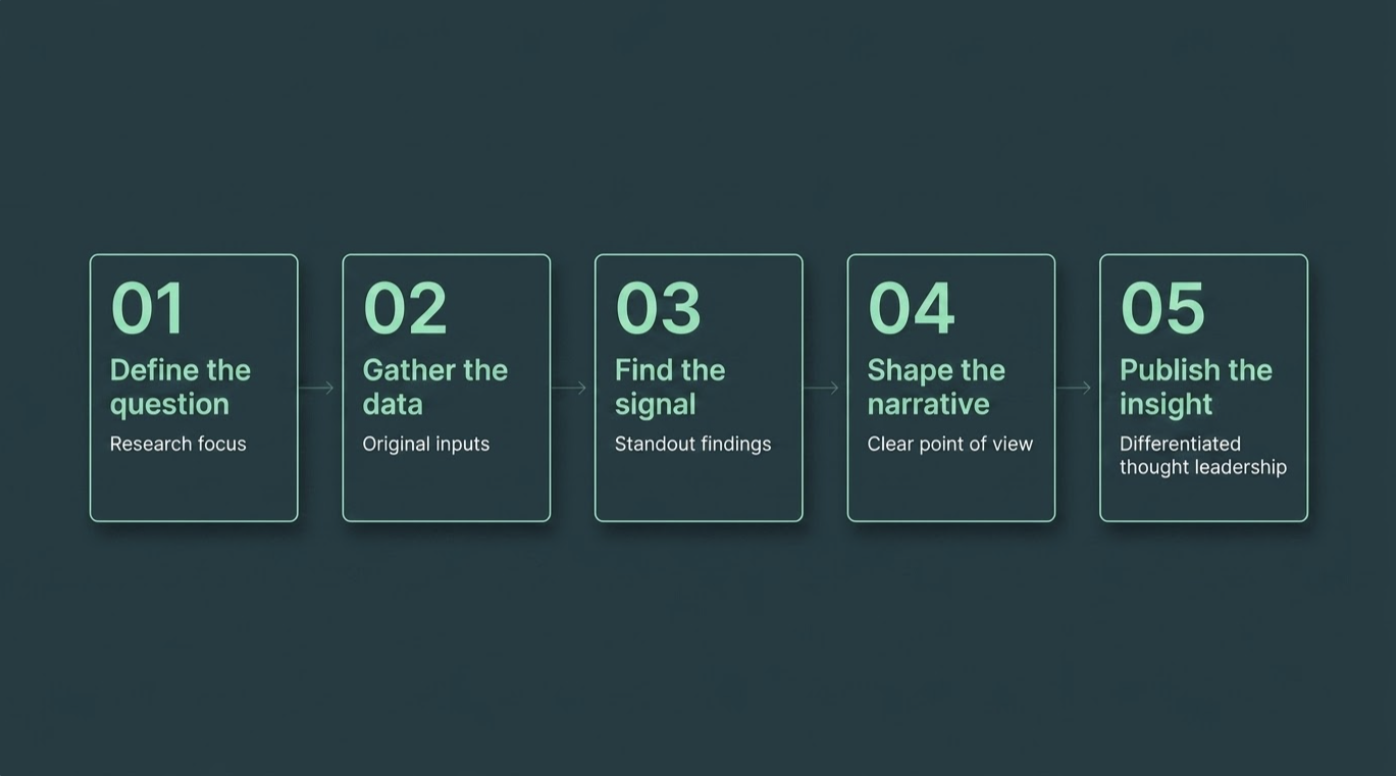

Step 1: Define the strategic question.

Output: one sentence that the survey will answer.

This is not the title of the report. It is the specific, testable claim the brand needs to validate or challenge. "Do US grocery shoppers trust on-pack sustainability claims?" is a strategic question. "What do consumers think about sustainability?" is not. A well-scoped question has a right answer and a wrong answer, and the brand has a hypothesis about which is true. The survey exists to pressure-test that hypothesis against real data. A well-scoped survey brief is one page: one research question, three to five hypotheses, and a named audience segment.
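As a rough illustration, the one-page brief described above can be written down as a small structure and checked before anything goes to field. This is a minimal sketch: the `SurveyBrief` class, its field names, and the checks are hypothetical, and the three hypotheses are invented placeholders rather than findings.

```python
from dataclasses import dataclass


@dataclass
class SurveyBrief:
    """Hypothetical one-page brief: one question, 3-5 hypotheses, one named audience."""
    research_question: str
    hypotheses: list[str]
    audience: str

    def problems(self) -> list[str]:
        """Return scoping problems; an empty list means the brief passes these checks."""
        issues = []
        if not self.research_question.strip().endswith("?"):
            issues.append("research question should be a single question ending in '?'")
        if not 3 <= len(self.hypotheses) <= 5:
            issues.append("expected three to five hypotheses")
        if not self.audience.strip():
            issues.append("audience segment must be named")
        return issues


brief = SurveyBrief(
    research_question="Do US grocery shoppers trust on-pack sustainability claims?",
    hypotheses=[
        # Placeholder hypotheses for illustration only, not survey results.
        "Most shoppers distrust on-pack sustainability claims",
        "Distrust is higher for private-label products",
        "Third-party certification raises trust",
    ],
    audience="US primary grocery shoppers",
)
print(brief.problems())  # prints [] for this brief
```

The checks are deliberately shallow. They cannot tell whether a question is strategically useful, only whether the brief has the shape the step calls for.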

Step 2: Define the audience and sample.

Output: a named audience segment with a specific N and a rationale for both.

For most B2C content use cases, a nationally representative sample of 500 US adults — or 500 adults in a named category such as primary grocery shoppers, skincare buyers, or financial product holders — is the right starting size. It is large enough to produce findings that hold at the category level, specific enough to be credible in a content claim, and small enough to field quickly. Audience definition is the variable that most commonly undermines otherwise well-designed surveys: a sample too broad produces findings that sound like common sense; a sample too narrow produces findings that cannot be generalised. The right audience is the audience whose behaviour the brand's strategy actually depends on.

One note: segment-level design — weighting findings by demographic, running cross-tabulations across age or income bands — adds analytical complexity that belongs in a separate conversation. For a first survey-backed content asset, one audience, one question, clean findings.
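To put the 500-person starting size in context, the standard margin-of-error formula for a proportion gives a sense of how precise a headline percentage can be. The sketch below assumes a simple random sample, a 95% confidence level (z = 1.96), and the worst case of a 50/50 split. Real panel samples are usually weighted, which tends to widen the margin, so treat the output as a lower bound rather than a property of any particular vendor's data. The function name is illustrative.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion.

    Assumes simple random sampling; p=0.5 is the worst case, z=1.96 is ~95% confidence.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    return z * math.sqrt(p * (1 - p) / n)


for n in (250, 500, 1000):
    print(n, f"+/- {margin_of_error(n) * 100:.1f} points")
# 250 +/- 6.2 points
# 500 +/- 4.4 points
# 1000 +/- 3.1 points
```

Doubling the sample from 500 to 1,000 narrows the margin by only about 1.3 points, which is one reason 500 is a common starting size for a single-audience, single-question survey.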

Step 3: Design the survey instrument.

Output: a survey of 10 to 15 questions, structured around the strategic question, with clear answer choices.

The governing principle: the answer to the strategic question should be expressible as a single data point — ideally a percentage — that can stand alone as a headline claim. Supporting questions build context. They explain why respondents answered as they did, identify sub-patterns, and produce the secondary findings that give a report depth beyond its headline. Every question that does not contribute to answering the strategic question or explaining it should be cut.

Step 4: Field the survey.

Output: clean, nationally representative response data.

This is where panel access and methodology determine whether the data is credible. A well-designed survey fielded to an unqualified panel produces numbers that look authoritative but do not hold up to scrutiny. Standard Insights fields surveys across its 170M+ global panel, with US nationally representative samples of 500 consumers delivered in 24 hours. The credibility of the data, the thing that makes a brand's content citable rather than dismissible, rests entirely on the rigour of the sampling process.

Step 5: Interpret the findings and build the content asset.

Output: a published report, article, or press-ready data release built around a single headline finding.

The finding is not the data. The finding is the strategic implication of the data, stated as a verdict. A result such as "53% of US grocery shoppers say they have abandoned a brand after discovering a misleading sustainability claim" is data. "Sustainability theatre is now a churn driver in the grocery category" is the finding: the sentence that earns media coverage, shapes a retailer conversation, and becomes the headline of the content asset. The brand's strategic point of view is what turns raw survey output into thought leadership. The data provides the authority; the interpretation provides the claim.
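Before a headline percentage is turned into a verdict, it helps to check how much sampling uncertainty sits around it. The sketch below takes the illustrative 53% figure from the paragraph above, expressed as a hypothetical 265 "yes" responses out of 500, and computes a normal-approximation 95% interval. The counts are invented for illustration, the function name is an assumption, and the calculation ignores weighting and design effects.

```python
import math


def headline_share(yes: int, n: int, z: float = 1.96) -> tuple[float, float, float]:
    """Return (share, lower, upper) using the normal approximation for a proportion.

    Assumes an unweighted simple random sample; z=1.96 corresponds to ~95% confidence.
    """
    if n <= 0 or not 0 <= yes <= n:
        raise ValueError("need 0 <= yes <= n and n > 0")
    p = yes / n
    half_width = z * math.sqrt(p * (1 - p) / n)
    return p, max(0.0, p - half_width), min(1.0, p + half_width)


# Hypothetical counts matching the illustrative 53% headline.
share, low, high = headline_share(yes=265, n=500)
print(f"{share:.0%} (95% interval roughly {low:.1%} to {high:.1%})")
# 53% (95% interval roughly 48.6% to 57.4%)
```

With these numbers the interval straddles 50%, so "more than half of shoppers" would be a shakier claim than "about half of shoppers". The wording of the verdict should stay inside what the interval supports.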

You Have the Report. Now What?

A published finding with no distribution plan is a press release no one sent. The survey does not build authority by existing — it builds authority by being placed where the right people encounter it.

The same dataset typically supports four distinct content formats, each serving a different part of the ideal customer profile's (ICP's) decision journey. The headline finding becomes a press release or media pitch. This is the NerdWallet model, and it works because journalists need data they can cite exclusively. The full report becomes a gated asset, exchanging depth for contact details from the exact audience the brand is trying to reach. A shorter editorial cut (one finding, one implication, one recommendation) goes into the brand's owned channels: newsletter, LinkedIn, sales collateral. And the raw data, or a selected cut of it, goes to the sales team as a conversation opener: "we surveyed 500 of your customers and here's what they told us" is a more effective opening than any product one-pager.

None of this requires a separate content operation. It requires deciding, before the survey goes to field, which formats the data will feed and writing the survey instrument with those outputs in mind. That is a brief conversation at Step 1. It determines whether a single 500-person survey produces one piece of content or six.

The survey design question and the distribution question are not sequential. They are the same question asked twice.

The Brands That Own the Conversation Are the Brands That Owned the Question

Between 2023 and 2024, the share of marketers creating blog content without AI involvement dropped from 65% to 5%. That is not a gradual shift. It is a structural change in the economics of content production — and its primary effect on brand thought leadership is not speed or volume but homogeneity. When synthesis and summarisation are available at negligible cost, secondhand analysis becomes the default mode of brand publishing. The content looks credible. It references real sources. It reaches accurate conclusions. It is also, in most cases, indistinguishable from the fifty other pieces published on the same topic by competing brands the same week.

There is exactly one form of content that is structurally immune to the homogeneity problem: a finding you commissioned, from a sample you defined, answering a question you set. That exclusivity is the only durable content advantage available right now.

The question every brand should ask before publishing any piece of thought leadership is not "is this well-written?" It is not "is this timely?" It is: "could our primary competitor publish this tomorrow?"

If yes — if the supporting data is publicly available, if the argument depends on shared industry findings, if the conclusion could appear under a different brand's logo without modification — the content is not building authority. It is confirming category membership.

Commission the survey. Define the question your category hasn't asked. Publish the finding only you have.

About the data: Standard Insights conducts nationally representative surveys across its 170M+ global panel. All third-party statistics cited in this article are sourced as attributed. No Standard Insights survey data is cited in this piece; the article references published findings from the 2024 Edelman-LinkedIn B2B Thought Leadership Impact Report and HubSpot's 2024 State of Marketing.
